On 20 November, Marta Kwiatkowska accepted the Royal Society Milner Award in London, awarded in recognition of her contributions to the theoretical and practical development of stochastic and quantitative model checking. The presentation of the award, which is given annually for 'outstanding achievement in computer science by a European researcher' and is supported by Microsoft Research, was accompanied by her lecture, 'When to trust a self-driving car,' which was webcast live. The video of the lecture is now available here.

Marta Kwiatkowska gave an invited talk at the 29th International Conference on Computer Aided Verification in Heidelberg, Germany, entitled "Safety Verification of Deep Neural Networks." The slides can be viewed here and the video is available here.

Robots are becoming members of our society. Complex algorithms have been making robots increasingly sophisticated machines with rising levels of autonomy, enabling them to leave behind their traditional workplaces in factories and to enter our society with its convoluted social rules, relationships, and expectations. Driverless cars, home assistive robots, and unmanned aerial vehicles are just a few examples. As such systems become more involved in our daily lives, their decisions affect us more directly. Therefore, we instinctively expect robots to behave morally and make ethical decisions. For instance, we expect a firefighter robot to follow ethical principles when it is faced with a choice of saving one person's life over another's in a rescue mission, and we expect an eldercare robot to take a moral stance in following the instructions of its owner when they are in conflict with the interests of others (unlike the robot in the movie "Robot & Frank"). Such expectations give rise to the notion of trust in the context of human-robot relationships and to questions such as "how can I trust a driverless car to take my child to school?" and "how can I trust a robot to help my elderly parent?" In order to design algorithms that can generate morally aware and ethical decisions, and hence create trustworthy robots, we need to understand the conceptual theory of morality in machine autonomy in addition to understanding, formalizing, and expressing trust itself. This is a tremendously challenging (yet necessary) task because it draws on many fields, including philosophy, sociology, psychology, cognitive reasoning, logic, and computation.

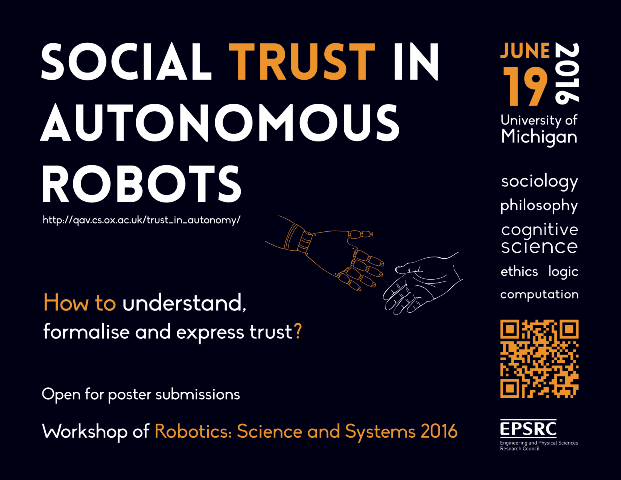

In this workshop, we aim to continue the discussions initiated in our RSS 2016 workshop on "Social Trust in Autonomous Robots," adding the theme of ethics and morality, and to shed light on these multifaceted concepts from various perspectives through a series of talks and panel discussions.

Part of Robotics: Science and Systems (RSS) 2017, to be held at the Massachusetts Institute of Technology, Cambridge, MA, USA.

Marta Kwiatkowska was among a small group of members of the Department of Computer Science who gave talks to members of the public at the Hay Festival. Her talk is available to watch or listen to here.

"Model checking and strategy synthesis for mobile autonomy: from theory to practice" (slides and video here), invited lecture at the Uncertainty in Computation Workshop, Simons Institute, 4-7 October 2016.

"Safety and trust for mobile autonomy" (view on YouTube here) - invited lecture at IntelliSys'16, London, 21-22 September 2016.

Marta Kwiatkowska gave an invited lecture entitled "Model checking and strategy synthesis for mobile autonomy: from theory to practice" at the ICALP 2016 conference this month. See here for the slides and here for the paper.

Marta Kwiatkowska gave a semi-plenary lecture entitled "Model checking and strategy synthesis for mobile autonomy: from theory to practice" at the ECC 2016 conference this month. See here for the slides and here for the paper.

We invite researchers attending RSS 2016, and others, to participate in this workshop focused on trust in autonomy.

This workshop will include a mix of invited talks, panel discussions, and contributed poster presentations. Poster submissions are encouraged from researchers and students wishing to give a poster presentation on any topic within the theme of the workshop.

The main goal of this workshop is to shed light on the little-understood notion of trust in autonomy from various perspectives. We have therefore invited a number of experts from both academia and industry, whose work focuses on the intersection of technology with sociology, philosophy, ethics, or logic, to give their views on the topic through talks and panel discussions.

For further information, visit the workshop homepage.

Email the organisers with any comments or questions.

This workshop is supported by the EPSRC.